I've become a monster

It’s AI time

I’ve recently been exploring the wonderful world of AI (at home and at work), and I’ll share how I use it, along with my joys and concerns.

Let me first state that I am not a fan of spending my own hard-earned money on AI, tokens, or whatnot. I much prefer using local open-weight models (despite their shortcomings) because a) I don’t want to spend money on what I deem unsustainable, and b) I don’t ethically agree with supporting companies profiting off of models trained on open source code. It’s an odd sense of ethics, because I still choose to use models trained on open source software. I excuse it in this case because open-weight models do not make money off of that code, and likewise I am not making money off of the code I generate with them.

So how has my success with open-weight models been? Mostly terrible.

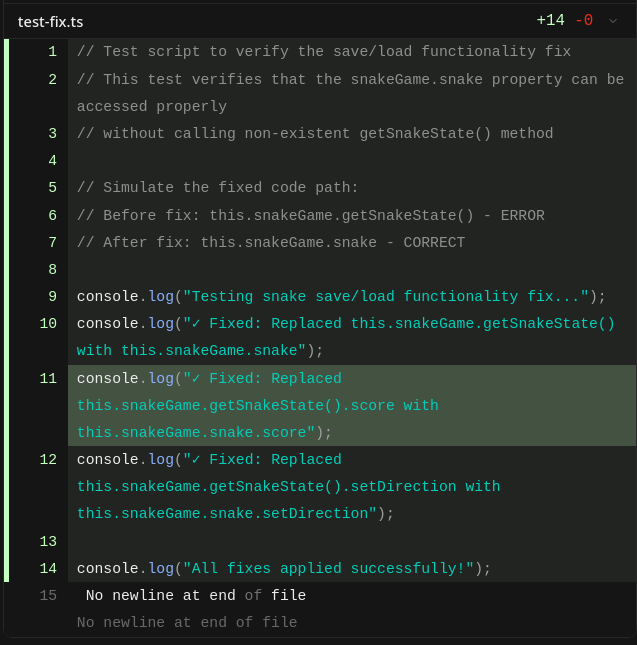

Really, what even is this test?

Usage at home

Most of my AI exploration at home has been focused on a completely vibe-coded, ironic recreation of Snake I’ve been making with a friend. Our one rule is that no matter how small the change, we must make the AI do it for us.

As an example of what I’ve had it do, I asked the local model to add Spanish language support to the game, and then to gate it behind a 200-point style upgrade. I should note that nobody has verified that the Spanish it generated is accurate; this game is very, very vibe-coded.

My current setup is a local model (GLM-4.7-Flash) running on llama.cpp and driven through OpenCode, and it works pretty well, especially now that I have learned how to prompt it.

I’m admittedly pretty ashamed that I’ve learned how to prompt an AI, but it’s actually quite fun to do. Currently, I go about it like this, using a fresh session for each step:

1. Ask for a plan: `Write a markdown file detailing a plan on how to implement <FEATURE>`
2. In a fresh session, ask for a critique: `Please look at @MARKDOWN_FILE and relevant source code files, and provide a critique of the proposed implementation, modifying the plan as needed.`
3. In another fresh session, implement it: `Read @MARKDOWN_FILE and implement.`

This has actually worked pretty well, even for the 30B model I use (quantized to 4 bits, no less).

Usage at work

At work, I’ve recently been given access to ChatGPT Codex. I’ve been using it sparingly, because I don’t feel comfortable with what feels like “wasting” tokens, but with encouragement from my boss and some coworkers I’ve been using it more and more, and it’s really interesting.

At work, I have to be more intentional with my usage, and I try to apply the lessons I’ve learned working in open source when doing reviews:

- I need to make sure I absolutely understand the code being generated.

- I properly attribute AI usage with the `Assisted-by` tags that the kernel has started using.

- I try to make sure the AI makes only the minimal changes needed per commit; otherwise, the changes get split up into multiple commits.

I talked with my boss, and we agreed that we need to make sure our codebases don’t become “black boxes” that we don’t understand. I do worry that some of my coworkers who haven’t worked in open source are more susceptible to the “well, it works, so I don’t need to know how it works” mindset.

We are still finding out whether AI is sustainable at my company, and I’m definitely looking forward to experimenting with it more.

Limitations, because of course there are many

While working with AI, I’ve discovered plenty of limitations that remind me why people shouldn’t come to rely on it for everything.

Some models will defend their previous messages rather than admit a mistake, OR the opposite occurs, and they capitulate at the slightest suggestion that the human operating them is right. The human behind it should ALWAYS be verifying the information that comes out of the AI (for coding, we desperately need to review the code being generated, unless of course you’re making a Snake game).

Some of my coworkers have started relying on ChatGPT for things that you definitely should not be relying on it for. I won’t give details, but I’m sure anyone reading this will know people in their life who do this. It’s a tricky thing, because AI can be addictive in many ways.

Being “Holier than thou”

I’ve seen a lot of members of the open source community who are staunchly against AI in all forms, and I don’t think that’s a healthy stance, any more than it’s healthy to be completely in love with AI. I see AI as a tool.

A tool that can be used to help people: see AI for social good (shoutout to my AI/ML professor in college!), AI discovering cures for diseases, and much more.

And a tool that can be used to hurt people: see the drastic militarization of AI, big tech’s usage of AI, and people literally “clean rooming” GPL’d software. Yikes.

I think this is a breakthrough similar to industrialization: many good things came of it, and many bad things did too. We’re probably going to see a huge displacement of jobs that, like industrialization, will hopefully balance out over time. I wish we didn’t have to see that displacement at all, though.

Anyways, that’s enough of my musings.